Johnson Controls expects more of its data center customers to move away from on-premises chillers and opt for network-connected systems soon, Aaron Lewis, chief commercial officer for the company’s global data center solutions, says.

The company has been shipping its chillers with onboard connectivity for some time, but its data center customers have been opting to keep their systems on-premises as they work through security capabilities, Lewis told Facilities Dive last month at Data Center World.

“We’ve actually cleared that [security] hurdle,” Lewis said in an interview. “With the hyperscalers we’ve worked with, [security] has not been a problem…. We don’t really worry about traffic coming in and out — and that’s generally the highest concern.”

Where the data is stored and who owns it are other issues that have kept its data center customers from moving the data the chillers generate to the cloud.

“You get into IP discussions,” Lewis said. “I think they’re very interested in the data, but there’s not a good model that’s emerged yet to make it work for both sides. That’s going to come, though.”

The data that chillers generate is key to optimizing them for efficiency, Lewis said. That’s the case whether the system is on-premises or connected. But by having it connected, data center operators can get help from Johnson Controls, as the original equipment manufacturer, or a third-party specialist to monitor the system for optimal performance and prevent emergencies.

“When you connect into the cloud [you get] real-time observation so you can … check on the overall performance as it’s running,” he said. “We can see the problem before it happens, order the parts and be ready as soon as we need to get out there for a planned visit.”

The shortage of technicians magnifies the benefit of connected operations because connectivity enables preventive maintenance, he said. “It’s much more expensive and much harder to roll a truck in an emergency” than to prevent problems through connected monitoring, he said.

The company is talking with a large customer about beta testing a connected chiller system. If it goes forward, more customers could decide to activate the onboard connectivity, Lewis said.

“It just needs that one person,” he said. “The market needs one person to accept it. People will say, ‘Oh, it’s OK for those guys to put it in their [service level agreements]. The hyperscalers are supporting it.’”

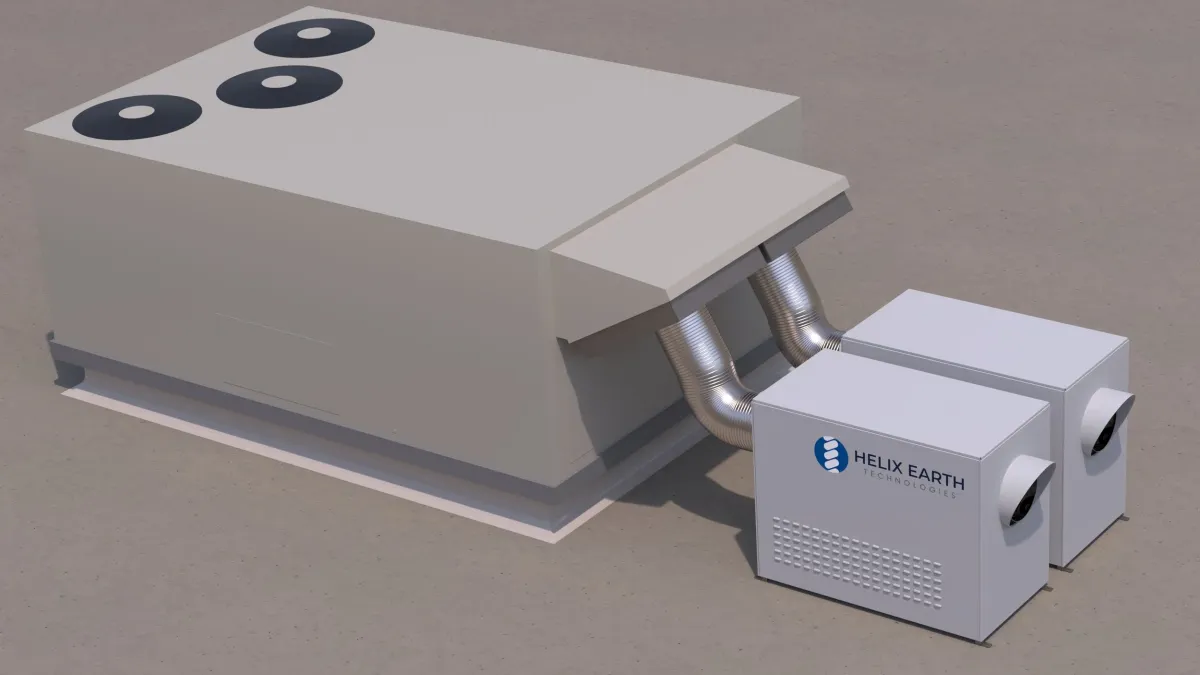

Late last year the company launched what it calls a scalable coolant distribution unit system that can serve data center blocks ranging from 500 kilowatts to 10 megawatts. The company calls its Silent-Aire CDU system an efficient and flexible alternative to traditional data center systems.

To stay in front of the liquid cooling wave that’s expected to dominate the data center space going forward, the company last year made a strategic investment in liquid cooling provider Accelsius. In the company’s fourth-quarter earnings call in November, Johnson Controls CEO Joakim Weidemanis called the Accelsius technology integral to the company’s goal of “deliver[ing] a comprehensive and integrated portfolio that addresses the full thermal management spectrum, from chip to ambient.”

The company’s purpose-built data center chillers are 40% more energy efficient than what data center operators were using two years ago, Katie McGinty, Johnson Controls’ vice president and chief sustainability and external relations officer, told Facilities Dive at the conference. “It was basically repurposed room space cooling systems” that were being used before, she said.

Johnson Controls has been making chillers for 100 years and learned how to make them small and quiet by supplying the cooling systems for U.S. Navy submarines — “where space is at a premium, energy is at a premium and reducing noise is at a premium,” she said.

The company’s chillers use 44% less space than the systems that data centers were using before, she said. Along with their evolution into quieter and more efficient machines, she said, their compact size could help address the public’s concern about locating new data centers in their communities since the property footprint can be smaller and the facility will generate less noise.

“We’ve all heard the concerns [about] the growth of data centers,” she said.

The company’s push to capture and redeploy waste heat could also help allay community concerns as data centers meet local bring-your-own-power requirements, she said. In some cases, power generation is as much as 50% inefficient, creating what McGinty calls a rich thermal resource that can be leveraged into additional on-site power generation.

“We can actually put that heat to work and that cuts the need of the electricity for the chillers, so it’s like a game changer,” she said.

The computer chips are an additional thermal resource, she said. “On the back end [of the computer systems] you’re looking at, like, 80 degrees,” she said. “If we slap a heat pump on the back end of that, then that thermal problem becomes a high-quality energy resource that can be used in [data center operations] or in the neighboring community or at a local hospital. ‘Hey, how would you like free heat?’ We’re not just taking the nail ... and seeing if there’s a bigger hammer; we’re trying to think about it differently, to say, ‘Is there an opportunity we can unlock?’”